No Real Child Was Harmed:

Can AI-Generated Images Make Nonprofit Communications More Ethical?

Cover image attribution:

Nadia Piet & Archival Images of AI + AIxDESIGN / Better Images of AI / Ways of Seeing / Licensed by CC-BY 4.0.

Visual imagery is an essential part of how nonprofits, humanitarian organisations and charities communicate with their audience, donors, and partners. Through strategically chosen photography, organisations can visualise the scope of their work, bring attention to their mission, create a coherent visual identity and establish themselves as trustworthy and legitimate in the public eye. Images play an increasingly important role in digital storytelling, shaping awareness and perception of human rights issues worldwide and expressing what words or statistics often cannot. They summon emotions: sympathy, indignation, despair, anger. These emotions, unstable as they may be, are frequently the conditions of action. They are what move people to give, share, protest, and care. For organisations dependent on public support, the image is therefore not merely decorative—it is operational.

It is precisely because images hold such power that they must be handled with exceptional care. Nonprofits and charities often occupy a public position as moral actors, institutions presumed to be working on behalf of justice, dignity, and repair. This makes the ethics of representation central to their work, particularly when the people represented belong to vulnerable or marginalised groups. In such contexts, the obligation to do no further harm should be foundational. Yet the line between help and harm is often blurry, even when intentions are good.

Humanitarian and nonprofit photography has long been criticised for relying on images of suffering, particularly images of children in vulnerable or precarious situations, to provoke an emotional response. While many organisations still use this trope, there has already been a great deal of critical work on it, and improvements have been made. Nevertheless, a new factor has now entered the field of nonprofit communication: generative artificial intelligence (AI). This is a particularly salient challenge because, as noted by Ka Man Parkinson, a humanitarian communications leader and researcher of AI adoption in the global humanitarian sector, communications and marketing is often the first department affected by AI implementation.

AI-generated images appear, at first glance, to offer a possible solution to some of the ethical problems associated with photography. If an image can be created without photographing a real child, survivor, or displaced person, it may seem to reduce risks around consent, privacy, exposure, and retraumatisation. It is also a significantly cheaper and faster way to create uniform-looking materials, which makes it tempting for organisations whose funds are already scarce. At the same time, AI-generated imagery introduces a different set of problems: it can fabricate suffering, obscure the boundary between documentation and invention, reinforce visual stereotypes, and undermine public trust in nonprofit communications.

This article aims to investigate this emerging issue, examining whether AI-generated images can be used ethically in nonprofit communications and under what conditions (if any) they can serve the public good. The focus is on images of children, because children are among the most frequently represented, yet also the most vulnerable, subjects in nonprofit and humanitarian campaigns. To examine this question, the article combines theory and practice. It draws on existing research and criticism around visual ethics, humanitarian photography, and responsible AI, while also turning to conversations with those who encounter these questions in their daily work: practitioners in nonprofit and humanitarian communications, photographers, and professionals working on AI ethics.

Photography, Power, and the Vulnerable Subject

The idea that documentary photography can be a source of harm is central to postcolonial critiques of visual representation. As Teju Cole has argued, it is part of a longer history in which colonised and dispossessed people were made visible to others: classified, studied, exhibited, pitied, and consumed. Photography helped produce the visual categories through which colonised people were understood by colonial administrations, missionaries, scientists, journalists, and later humanitarian organisations. As the Zimbabwean novelist Yvonne Vera put it:

The camera has often been a dire instrument. In Africa, as in most parts of the dispossessed, the camera arrives as part of the colonial paraphernalia, together with the gun and the bible, diarising events, the exotic and the profound, altering reality, introducing new impulses and confessions, cataloguing the converted and the hanged.

This matters for contemporary nonprofit and humanitarian communications because many of their visual conventions inherit colonial ways of seeing. Images of the Global South, refugees, children, and disaster-affected communities are often produced for distant audiences who are asked to feel concern, compassion, outrage, or pity. Even when the purpose is advocacy or fundraising, the structure of looking remains unequal: one person’s suffering becomes another person’s moral encounter.

In the nonprofit and charity environment, there seems to be a particular justification for this: you need to show the challenging and dire realities of a given population to make people aware, to make them care, and to make them want to do something to help those less fortunate than themselves (e.g. donate, spread awareness, push for political action). As discussed by Claire Moon, this knowledge-action nexus is an assumption central to the practice of human rights reporting, human rights communication, and the human rights movement in general.

Indeed, throughout history, there have been photographs that shifted global perspectives on conflict and human rights by showing evidence of atrocities and injustices and by mobilising collective empathy. One celebrated as a watershed moment in collective consciousness is Nick Ut’s Napalm Girl (1972), which captures nine-year-old Phan Thị Kim Phúc running naked towards the camera after a napalm attack. The image is credited with shifting global public opinion against the Vietnam War by exposing the raw human cost of war for innocent victims. Yet the very force of the photograph also reveals the ethical tension at the centre of humanitarian imagery: its power depends, in part, on the exposure of a vulnerable child at the worst moment of her life.

Another example is a now-famous photograph taken in September 2015 by Turkish photojournalist Nilüfer Demir, depicting the body of a drowned 3-year-old Syrian Kurdish boy, Alan Kurdi, lying face down on a Turkish beach. Alan had drowned with his mother, Rehana, and his brother, Galib, after the boat carrying them from Turkey towards Greece capsized. The photograph of Alan’s tiny, lifeless body moved rapidly through newspapers, television broadcasts, and social media. It became, almost immediately, a visual symbol of the refugee crisis, provoking moral outrage around the politics of displacement.

Today, however, we seem inundated with images of war, violence, and suffering, jumping out at us from our smartphones first thing in the morning. While the problem is particularly acute in the age of social media, it already troubled Stanley Cohen in 2001. He opens States of Denial with a vignette of a comfortable middle-class couple having a breakfast of coffee and croissants while browsing the weekend papers, which contain a sheaf of humanitarian appeals. What does this information do to them, and what do they do with it? Cohen asks.

Cohen’s later research demonstrates that recipients of humanitarian communication tend to engage in complex strategies of denial to avoid responding to such appeals. Instead of a surge of motivation to act, what appears is detachment, denial and self-justification, sometimes referred to as bystander alibis. As Susan Sontag argued, this is not just the result of over-exposure or indifference towards human suffering and violence, but of a feeling of helplessness: a conviction that this is just what the world is like, how it always was, and that even if you wished to, you probably could not do much about it. She wrote:

The more remote or exotic the place, the more likely we are to have full frontal views of the dead and dying. […] These sights carry a double message. They show a suffering that is outrageous, unjust and should be repaired. They confirm that this is the sort of thing which happens in that place. The ubiquity of those photographs, and those horrors, cannot help but nourish belief in the inevitability of tragedy in the benighted or backward—that is, poor—parts of the world.

This collective desensitisation (sometimes referred to, particularly in the humanitarian sector, as compassion fatigue) is perhaps one of the challenges nonprofit organisations face when trying to produce communication materials that will stop recipients from scrolling and prompt them to act. The common logic is that the more shocking or harrowing an image, the more likely it is to provoke an emotional reaction. This makes it particularly tempting for organisations to use images of children, who already constitute a vulnerable population in their own right. When this is compounded by challenges such as poverty, abuse or displacement, children become figures of double vulnerability. Associated with innocence, purity, and a lack of agency, the figure of the child embodies the ideal victim trope and the ultimate humanitarian subject. In contrast to adults, who are seen as far more agentic, complex and messy, children are perceived as not having contributed to their own fate, and therefore as our collective moral responsibility.

The sheer effectiveness of the image of a child in distress is one of the reasons why humanitarian organisations (even those whose work extends beyond children) regularly feature minors on their home pages and in other key marketing materials. Children with big eyes and swollen bellies, children in ragged clothing with dirt on their faces, children amid the rubble with tears running down their cheeks, children tragically sick or wounded: they speak to us from fundraising ads, pleading with us to take action or to donate to a cause. After all, it seems far crueller to refuse help to a child than to an adult.

But what this imagery does is ask real children to become the visual proof of suffering: of war, of hunger, of displacement, of the failure of others to protect them. Their expressions are given meanings they may never see, in campaigns, captions, and appeals written in languages they may not speak. The ethical question begins here, with consent. As Françoise Duroch and Maelle L’Homme observe, the subjects of humanitarian photography rarely get to see the images taken of them, know where those images will appear, or have any say in how they are described or used.

Steve McCurry’s photograph Afghan Girl remains perhaps the most famous example of this problem. Taken in 1984 in a refugee camp near Peshawar, Pakistan, and published the following year on the cover of National Geographic, the photograph depicts eleven-year-old Sharbat Gula looking directly into the camera. The image became famous almost immediately: the girl’s piercing green eyes, her torn, vivid red headscarf, and her ambiguous expression made her, for Western audiences, the embodiment of the refugee girl in a faraway camp. A figure of tragic and mysterious beauty, vulnerable yet strong, she played straight into the orientalist narrative of the Muslim woman in need of saving, a trope that has significantly shaped humanitarian communications.

Becoming the subject of one of the world’s most famous photographs came at a cost for Sharbat. For years, the girl in the photo was nameless, known only as the Afghan Girl, as the photographer did not even know her name. As the image’s popularity grew, McCurry embarked on an elaborate search, which ended with Sharbat being identified through iris-recognition technology. When she was found, nearly two decades after the photograph was taken, she was living in precarity and had never seen the photo.

The image’s afterlife also followed Sharbat into later public crises. In 2016, when she was arrested in Pakistan over alleged irregularities in her refugee documentation, much of the international media coverage identified her through the old image: not first as a woman facing detention and possible deportation, but as the famous Afghan Girl. In the aftermath, she said she was upset and angry that the photograph had been taken and published without her consent. It was then revealed that her haunted eyes reflected not the fear of war, as had been assumed, but the discomfort of being photographed by an unrelated man, something that, in Pashtun culture, could be seen as a violation of norms of privacy and modesty. Her case shows how humanitarian photographs can outlive the moment in which they are taken and fix a person inside a story they did not choose, and from which they may never fully escape.

Even when verbal or written consent to being photographed is given, children may not fully understand how widely an image will circulate, how long it will remain online, or how it may affect them later. More fundamentally, consent in humanitarian contexts takes place within unequal relationships, where access to aid, services, or protection may depend, implicitly or explicitly, on cooperation. As some humanitarian practitioners note, it can be difficult to determine whether consent is freely given or shaped by the conditions in which it is requested, such as dependency, gratitude, or the fear of losing support. In this sense, the question is not only whether consent was obtained, but under what circumstances.

Ethical Approaches to Representing Children

At the same time, there are organisations that operate with high integrity and ethical awareness, which do not just broadcast the struggles of vulnerable populations but, crucially, devise effective action plans and solutions. While practice admittedly varies between organisations, they are often mindful of the ethical issues surrounding photographic representation: they have dedicated staff and carefully written policies in place to make sure their imagery is not exploitative, with legal teams overseeing consent matters and communications teams working diligently to create strategies that balance effectiveness with ethics. They prioritise consent and dignity, and they have trauma-informed teams trained in handling sensitive issues.

To understand how human rights concerns about representing children in nonprofit communications are understood and applied today, I spoke to Sona Shaik, an advocacy and communications professional who has worked as a consultant for major nonprofits and UN system organisations. She shared that when using images of children, her primary concerns are fair and dignified representation, informed and reversible consent, and also truthfulness:

Does it reflect the reality on the ground? Is it accurately and appropriately aligned with and reflecting the story we want to tell?

She was also acutely aware of the representation concerns discussed above, citing the need to avoid perpetuating poverty porn, white saviourism and reductive tropes:

It’s important to avoid images that reduce people to the circumstances of their suffering, and to highlight people as empowered and agents of change, not just paint people as victims or passive actors.

It is encouraging that today, in more and more global organisations and nonprofits, these principles are at the forefront of communication campaigns. Not showing children in explicit distress or suffering, or not showing their faces at all, is one common way for organisations to protect children’s dignity and privacy. This is already a significant step towards preventing children from being reified as symbols of suffering. Indeed, some of the most poignant photographs used in campaigns on children’s rights do not depict suffering blatantly, but in a subtler, more understated way: for example, by showing children’s surroundings or objects associated with them.

Still, using documentary photography at all when dealing with children in contexts such as abuse, trauma, sexual violence, trafficking, refugee status, criminal circumstances, and more is always a risk, and any identifiable imagery (of their bodies, conditions, belongings, or surroundings) can put them in danger. An argument can be made that documenting these circumstances for a communications campaign or to garner donor support, even when a face is concealed, is still extractive.

Real Lives, Synthetic Images: The Ethics of Non-Exposure

Protecting privacy, avoiding extractive image-making, and not using real children as fundraising objects are among the main arguments for using AI-generated imagery instead of real photography. This possibility has already been tested in documentary practice. In the BBC documentary I’m an Alcoholic: Inside Recovery, AI face-swapping was used to anonymise members of Alcoholics Anonymous. Rather than blurring or pixelating faces or relying on shots of non-identifying body parts, the technique allowed interviewees’ facial expressions to remain visible while protecting their identities. The BBC also noted that traditional anonymisation can carry unintended associations of shame, suspicion, or criminality, making contributors look like perpetrators rather than people sharing testimony.

For nonprofits working with sensitive stories, this approach may be relevant when identity concealment is necessary but risks making the subject feel distant, faceless, or less fully human. As noted by Ka Man Parkinson,

There is a case to be made for the use of AI-generated imagery. Gülsüm Özkaya of IHH International Relief Foundation in Türkiye is conducting her MA thesis on this very question, speaking directly with people in crisis-affected communities. In a recent discussion for the Humanitarian Leadership Academy’s Fresh Humanitarian Perspectives podcast, she shared that some research participants prefer real images while others prefer AI-generated ones.

Arguing that it is critical to include affected communities in discussions around ethical visual standards, Gülsüm shared that she observed a correlation between interviewees’ familiarity with AI tools and their attitudes towards AI-generated images: research participants who used AI more frequently seemed more comfortable with synthetic visuals. This becomes important in the context of children, whose understanding of generative AI will naturally be more limited. It raises the question of whether they can give informed consent to such representation. If a person has limited knowledge of how generative AI works, how images are produced, or how they might circulate, then their consent to being represented through such tools may be incomplete.

But what if an image is not meant to represent a real child, but is fully synthetic? If the child in the image does not exist, who is the subject of ethical concern? As pointed out by Ka Man Parkinson,

Even where an image is not based on a living person, it may still be a composite drawn from real photographs in training data—meaning the notion of a ‘purely synthetic’ child image may not be clear-cut.

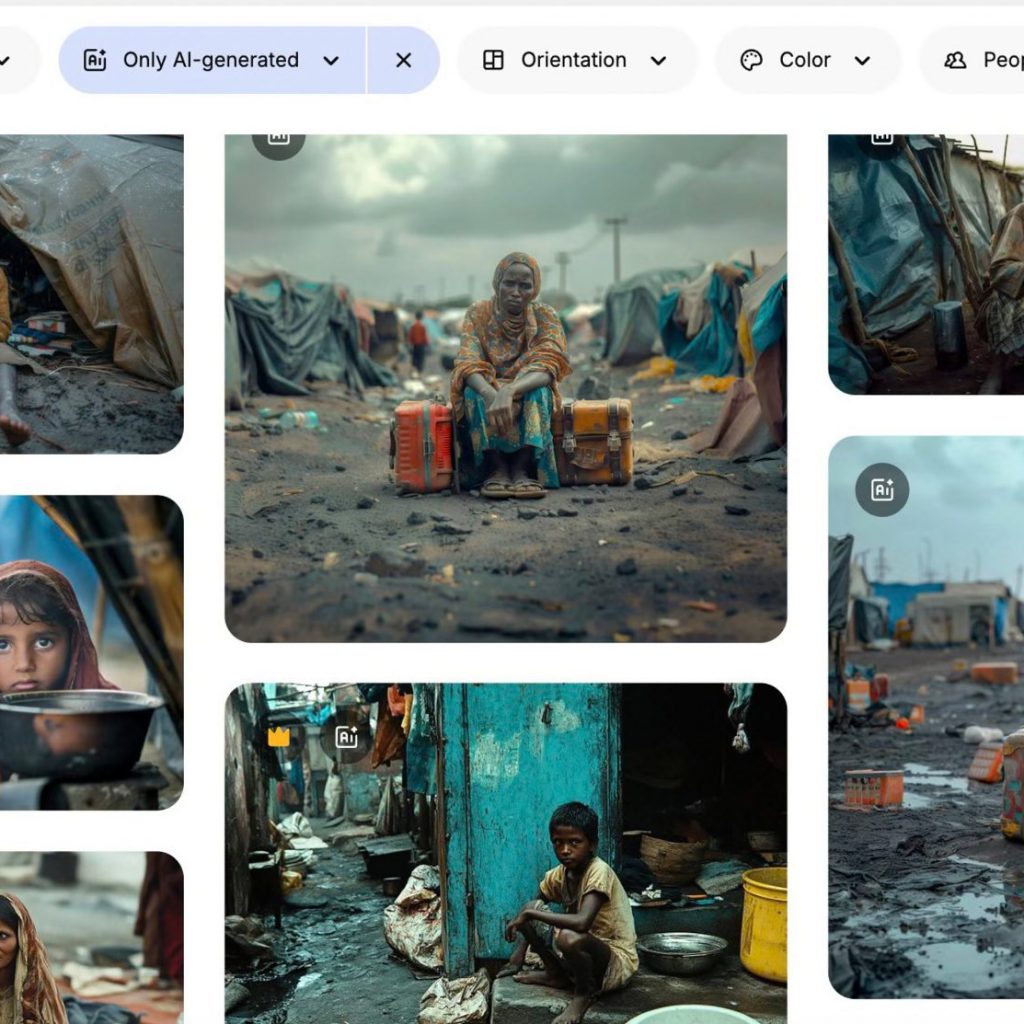

This is why, while synthetic imagery might reduce the harm of exposure, it still risks inflicting the harm of representation. As discussed in 2025 research by Arseni Alenichev and colleagues, AI technology is already being used to reproduce the very stereotypes that ethical guidelines were designed to prevent, including hyper-emotive images of starving children and Black and Brown bodies in various states of distress and suffering. As Anisa Abeytia points out, this is because AI is not a neutral technology: “It will reflect and often amplify the biases that already exist: racism, exclusion, inequality. If those are the defaults in our systems, they will become the defaults in AI.”

Image source: The Guardian

As noted by Stephany de Cohen, “Because no ‘real person’ is involved, some organisations use AI as a loophole; a justification to recreate exploitative tropes they would avoid with real subjects.”

A similar concern has been voiced by Ka Man:

When it comes to children specifically, generating photorealistic images raises ethical questions and operational grey areas and risks. With photography, a child’s caregivers can provide informed consent and withdraw permission at any time. With AI-generated images, once entered into circulation, there is no clear course of redress that I’m aware of if it is modified in inappropriate or harmful ways.

This dark aspect is something nonprofits should keep in mind. Sadly, generative AI has already been used by bad actors to create vast amounts of non-consensual nude imagery and deepfake pornography, including AI-generated child sexual abuse material (CSAM). As documented in the Internet Watch Foundation’s (IWF) October 2023 report on AI-generated CSAM, such images, now so realistic that they are often indistinguishable from real CSAM, frequently feature the faces of known, real victims, de-aged faces of celebrities, and famous children; perpetrators also “nudify” children whose clothed images were uploaded online in legitimate contexts. Such content is proliferating rapidly and offers perpetrators a way to profit from child sexual abuse.

This dark side of AI is something I also talked about with Anisa:

“When we talk about AI-generated images of children, we also have to think about what we are normalising. Do we really want to create more images of children, especially vulnerable children, for circulation?” she pointed out. “Children should be protected as a starting point. The question is not only whether we can generate these images, but whether we should. Once that door is open, it becomes very difficult to control how the technology is used, or misused. We tend to focus on immediate benefits, but we don’t always ask what happens next: how it might be used in ways we didn’t intend. We’ve seen that pattern before. Technologies don’t stay confined to their original purpose.”

Deceptively realistic AI images are problematic in other ways too: unless very clearly labelled, such visuals could mislead audiences, discredit the organisation’s work and erode trust. To alleviate concerns about misinformation, non-realistic images could be a better alternative. Ka Man Parkinson also raised this point:

It is worth considering stylistic alternatives to photorealism – illustration, graphics and other non-realistic representations. Elrha has made a case for hand-drawn and cartoon imagery as a thoughtful middle ground. The right choice depends on context.

Doing More With Less

Another point is that, apart from the largest organisations, most nonprofits, particularly in low- and middle-income countries, are under constant pressure to decide how to spend their limited funds. Investing in communications and marketing, for example by hiring documentary photographers to take bespoke images for each campaign, means spending less on work on the ground. It is also something that some smaller organisations, such as grassroots groups, simply cannot afford, or at least not regularly. Without a budget for professional photography, it is also much harder to take photos that will resonate, especially when any of the risk factors described above are present.

One of the ways nonprofits and charities navigate marketing imagery on scarce budgets is through stock photos. While it may take some time to find the right one, many platforms now offer high-quality, realistic, diverse and inclusive stock imagery. This matters, as stock imagery is often generic and impersonal, frequently leaning into tropes and stereotypes. Still, this option can be appropriate, for example when a nonprofit wants to publish an article on a given topic or shine a spotlight on a relevant development, and has no suitable imagery of its own. Stock visuals are comparable to AI-generated images in that they do not depict the reality in question and lack authenticity. But there are situations where the point of an image is not to document a particular situation but to allude to it more broadly. Of course, full disclosure and transparency are needed to ensure the audience is not misled or manipulated.

AI also offers an advantage that stock imagery lacks: the ability to personalise an image, or simply to create one from a detailed description. Combined with easy access and savings in time and effort, this is where AI is clearly unmatched. Alongside its constantly growing capabilities, this is one of the reasons it is already being used in nonprofit communications. David Girling and Deborah Adesina recently discussed this in a research project, Artificial Authenticity: The Rise of Images Generated by Artificial Intelligence (AI) in Charity and Development Communications, in which they analysed AI-assisted social campaigns by major organisations such as Amnesty International, Plan International and the World Wildlife Fund. One striking observation about audience responses was that what drew the most comments was not the humanitarian issues at stake, but the AI-generated nature of the accompanying images. This was particularly visible in the case of WWF Denmark, which faced public scrutiny over its use of AI tools in a sustainability campaign, with its audience voicing concerns about a clash of values.

Image: WWF Denmark, The Hidden Cost campaign, created using ChatGPT by AI designer Nikolaj Lykke Viborg

Is there a way to mitigate this backlash, for example by being more transparent about AI use and refining the output to the best possible quality? Full disclosure (not just a clear statement that AI was used but, ideally, also alt text naming the tool and the prompt used, or a link to the organisation’s AI policy) is important for the ethical standing of the practice, but it is not enough in itself. Girling and Adesina point to a larger scepticism at play:

What is particularly striking is that labelling images as AI-generated did not help much. Even when organisations were transparent — 85% of images in the study were properly disclosed — audiences still shifted into a critical, sceptical mode. Rather than being moved by a cause, they became investigators, scrutinising images for flaws and questioning the ethics of the technology itself.

What, then, are the ethics of the technology itself?

Who Pays The Price For AI Visuals?

While AI can offer innovative solutions, many people oppose the technology altogether, in the nonprofit world and beyond. One frequently cited reason is that using new technologies to replace human labour threatens the livelihoods of human professionals. To explore this, I spoke with frontline journalist and photographer Thomas Noonan and asked how he feels about the use of AI-generated images in nonprofit campaigns.

“I am firmly opposed to the use of AI-generated images for any reason whatsoever due to ecological and authorship rights,” he said, noting that AI generation is essentially an imitation of someone else’s work.

His concerns reflect two common objections to generative AI: its ecological footprint and its relationship to authorship, consent, and cultural extraction. AI systems require significant energy and water to train and run, and the burden of that infrastructure is not evenly distributed. At the same time, many generative AI tools have been trained on vast collections of human-made images and artworks, often scraped without the knowledge, consent, compensation, or credit of the people who created them.

AI-generated art is therefore questionable not only because of its economic impact on livelihoods, but also from a symbolic and artistic standpoint. While its capabilities are improving rapidly and it can produce impressive results in some contexts, much of this content remains generic, derivative, or aesthetically hollow. Used at scale, synthetic imagery threatens the broader cultural ecosystem by crowding out original, human-made work and normalising a visual culture detached from lived experience, authorship, and accountability.

This does not mean that every use of generative AI is automatically unjustifiable. So-called AI for Good applications, such as generating medical research insights, improving disaster early-warning systems, or building accessibility tools, are certainly easier to justify than generating endless disposable images or low-quality automated slop.

“AI can absolutely be useful. It can solve real problems,” Anisa Abeytia acknowledges, as she tells me about her work with an NGO using generative AI to help women in Uganda access information about reproductive health. “But it comes with costs: environmental, social, political. If we’re using AI-generated images at scale, we also have to ask: what does that cost the planet? Does the purpose we want to implement AI for justify its use?”

That might be the core issue. People discuss whether AI can have positive uses, but that is not the right question; we know it can. The question that matters more is whether each use is necessary, proportionate, and honest about the human, cultural, and environmental costs it carries.

Conclusion

The introduction of AI-generated images into nonprofit communications is a new and complicated challenge. Entering a field already marked by difficult questions around visibility, dignity, consent, and power, it makes those questions even harder to answer. And while the technology is proliferating rapidly, public understanding of it, including its ethical dilemmas and possible repercussions, is not keeping pace. This is why it is imperative for nonprofits, charities, and humanitarian organisations to pay attention now, develop digital and AI literacy, and think critically about how new technologies are used in their organisations.

The humanitarian sector has something important to offer here. It already works with governance, ethics, and accountability in complex, fast-changing environments. AI development isn’t so different in that sense. Instead of just adopting new technologies wholesale, or rejecting them outright, humanitarian organisations could help shape how they’re used – grounding them in principles like inclusion, participation, and responsibility. That’s where the conversation needs to start.

For better or worse, AI has already entered the sector, and its future there will likely depend on how organisations deal with it. It is therefore up to nonprofit and humanitarian organisations to spearhead responsible and ethical AI use, leading conversations about concerns and boundaries.

While there may be a case for using AI-generated imagery in nonprofit communications, such use can only be ethical when it is transparent, carefully governed, culturally reviewed, and not used as a shortcut to mislead or manipulate emotion. Nor can it replace photography in its documentary or artistic purpose: it does not evoke trust in the same way, and it cannot show people the reality on the ground or evidence of the organisation’s work. But perhaps it could supplement photography, and there may be a place for it in communications strategies. Such use makes sense when the aim is to reduce harm: to protect children’s privacy and avoid the extraction of real suffering, to represent sensitive issues without exposing their victims, or to allow smaller, resource-constrained organisations to communicate more effectively. As Ka Man Parkinson concisely put it,

Whatever the approach – photography or AI – the red line remains the same: are individuals, and children in particular, depicted with dignity? AI gives us more creative choices, but it does not reduce our ethical responsibilities. That means no reductive depictions, no stereotypes, no dehumanising portrayals. It means keeping a human-in-the-loop – ideally working with people from the communities being portrayed to ensure imagery is sensitive, accurate and respectful.

Author: Barbara Listek